Single layer classification example

Problem statement

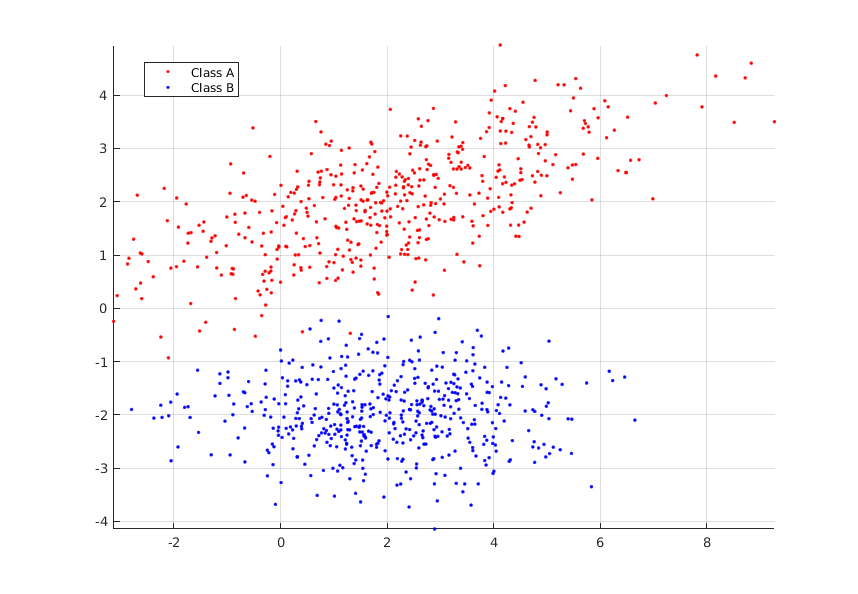

In this example, we consider a dataset where each input vector \( X = (x, y) \) is associated with class A or class B, encoded as +1 and -1 respectively. The following figure illustrates the classification problem:

Network architecture

The single layer architecture is the following:

Because the network must distinguish class A from class B, its activation function has to produce outputs that separate the two classes. In this example, the hyperbolic tangent has been selected:

The hyperbolic tangent was chosen because its output lies between -1 and +1, which matches the values used to encode the classes. The output can be interpreted in two ways: in terms of binary classes (A or B) or in terms of probabilities.
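As a minimal sketch of this architecture, the snippet below computes the output of a single tanh neuron for a few two-dimensional points. The names `forward`, `weights` and `bias`, as well as the parameter values, are assumptions made for illustration; they are not taken from the original example.

```python
import numpy as np

def forward(points, weights, bias):
    """Raw network output: tanh of the weighted sum of the inputs."""
    return np.tanh(points @ weights + bias)

# Hypothetical parameters, chosen only for illustration.
weights = np.array([1.0, -1.0])
bias = 0.0

points = np.array([
    [2.0, 0.0],  # x > y, so the weighted sum is positive
    [0.0, 2.0],  # x < y, so the weighted sum is negative
    [1.0, 1.0],  # x = y, so the weighted sum is zero
])

outputs = forward(points, weights, bias)
print(outputs)  # every value lies between -1 and +1
```

With these assumed parameters, the sign of the output depends only on which side of the line \( x = y \) the point falls.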

Binary interpretation

To determine whether a sample belongs to class A or B, one can apply the following rule: positive outputs belong to class A, and negative outputs belong to class B. Mathematically, we add the following function after the output of the network:

- \( o=+1 \) when tanh is positive

- \( o=-1 \) when tanh is negative
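The rule above can be sketched as a small thresholding function. The name `binary_class` is hypothetical, and the text does not say how an output of exactly zero should be handled; assigning it to class A here is an assumption.

```python
import numpy as np

def binary_class(raw_output):
    """Map raw tanh outputs to +1 (class A) or -1 (class B)."""
    # Assumption: an output of exactly 0 is assigned to class A.
    return np.where(np.asarray(raw_output) >= 0, 1, -1)

labels = binary_class([0.8, -0.3, 0.05])
print(labels)
```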

Probabilistic interpretation

The second option is to interpret the output of the network as the probability of belonging to class A or B. When the output is equal to +1, the probabilities for the sample to be classified in class A and in class B are one and zero respectively. The following equations generalize this concept and convert the network output into probabilities, where \( o \) denotes the raw tanh output of the network:

Probability of being in class A:

$$ p_A = \frac{o+1}{2} $$

Probability of being in class B:

$$ p_B = \frac{1-o}{2} $$

Note that the sum of the probabilities is always equal to one ( \( p_A + p_B = 1 \) ).
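The two formulas can be checked with a short sketch. The function name `class_probabilities` is an assumption, and the input is taken to be the raw tanh output in the range -1 to +1.

```python
def class_probabilities(o):
    """Convert a raw tanh output o in [-1, +1] into (p_A, p_B)."""
    p_a = (o + 1) / 2
    p_b = (1 - o) / 2
    return p_a, p_b

p_a, p_b = class_probabilities(0.5)
print(p_a, p_b)  # the two values sum to one
```

An output of 0 gives equal probabilities for both classes, which corresponds to a point lying on the decision boundary.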

Results

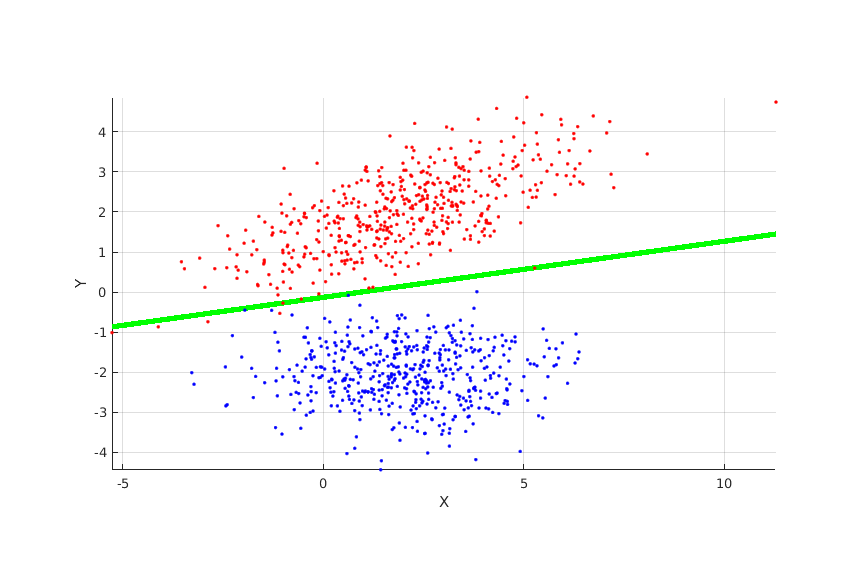

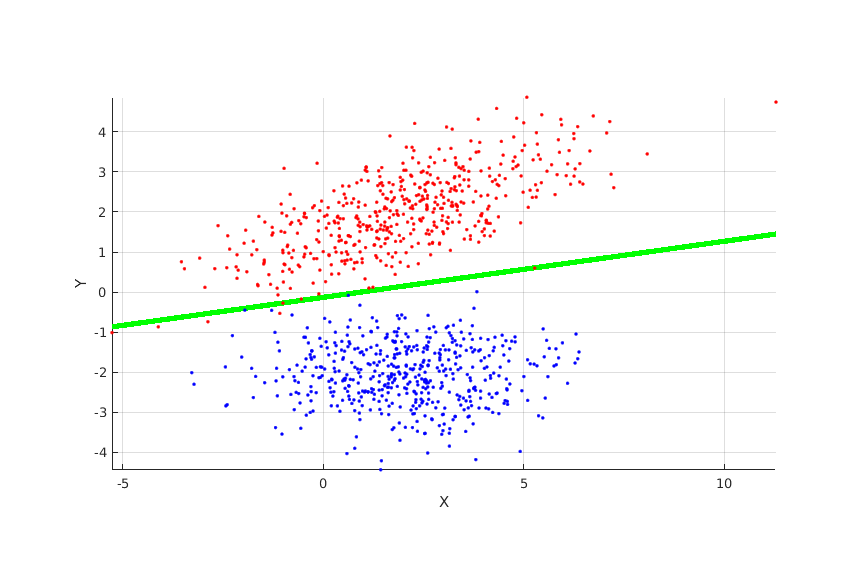

The following figures show how the space is split to separate the classes:

The following figures give an overview of the training results.

- The surface is the raw output of the network.

- Red dots are points in training dataset belonging to class A.

- Blue dots are points in training dataset belonging to class B.

- Green circles are correctly classified points.

- Black crosses are misclassified points.
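To make the distinction between correctly classified and misclassified points concrete, here is a minimal sketch that trains a single tanh neuron and counts both groups. It is not the code behind the figures: the synthetic dataset, the squared-error loss, the learning rate and the number of iterations are all assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic, linearly separable data (an assumption, not the article's dataset):
# class A (+1) is centred on (2, 2), class B (-1) on (-2, -2).
n = 100
class_a = rng.normal(loc=2.0, scale=0.5, size=(n, 2))
class_b = rng.normal(loc=-2.0, scale=0.5, size=(n, 2))
points = np.vstack([class_a, class_b])
targets = np.concatenate([np.ones(n), -np.ones(n)])

weights = np.zeros(2)
bias = 0.0
learning_rate = 0.1  # hypothetical value

for _ in range(200):
    outputs = np.tanh(points @ weights + bias)
    # Gradient of the squared error through tanh, using
    # d tanh(s) / ds = 1 - tanh(s)^2.
    delta = (outputs - targets) * (1.0 - outputs ** 2)
    weights -= learning_rate * (points.T @ delta) / len(points)
    bias -= learning_rate * delta.mean()

# Threshold the raw output, then compare with the expected class.
predicted = np.where(np.tanh(points @ weights + bias) >= 0, 1, -1)
well_classified = predicted == targets
accuracy = well_classified.mean()

print("correctly classified:", int(well_classified.sum()))
print("misclassified:", int((~well_classified).sum()))
```

In a plot such as the ones described above, the `well_classified` mask would select the points drawn with green circles, and its negation the points drawn with black crosses.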

See also

- Neural networks curve fitting

- Datasets for deep learning

- Gradient descent example

- How popular are neural networks over the years?

- Install TensorFlow and Keras for Linux

- Learning rule demonstration

- Linear regression example

- Most popular activation functions for deep learning

- Most relevant deep learning research papers

- Neural Network Perceptron

- Simplest neural network with TensorFlow

- Simplest perceptron

- Single layer training algorithm

- Gradient descent for neural networks

- Single layer limitations

- Neural networks